Why is your supplier’s “capabilities list” always inflated? Don’t be swayed by their advertised PCB Assembly Capabilities.

When selecting a PCBA supplier, we are often captivated by impressive capability lists.

While recently studying embedded systems, I discovered a rather interesting phenomenon—many people associate process control module PCBs with large industrial automation equipment. In reality, this concept is much closer to our daily lives than we imagine.

Remember the smart fish tank I modified last year? That small thermostat contained a miniature PCB silently working inside. It reads the water temperature data every few seconds and compares it to the preset value—this process is essentially process control, just on a much smaller scale.
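The read-compare-act loop described above can be sketched as an on/off controller with a small hysteresis band, which keeps the heater from toggling on every reading near the setpoint. This is a minimal Python sketch under assumptions: the `Thermostat` class, the 0.5 °C band, and the sample readings are hypothetical, not taken from the actual fish tank firmware.

```python
from dataclasses import dataclass


@dataclass
class Thermostat:
    """Hypothetical on/off controller with a hysteresis band around the setpoint."""
    setpoint_c: float          # preset target water temperature
    hysteresis_c: float = 0.5  # assumed half-width of the dead band, in deg C
    heater_on: bool = False

    def update(self, measured_c: float) -> bool:
        """Compare one reading with the setpoint and return the new heater state."""
        if measured_c < self.setpoint_c - self.hysteresis_c:
            self.heater_on = True    # clearly too cold: switch the heater on
        elif measured_c > self.setpoint_c + self.hysteresis_c:
            self.heater_on = False   # clearly too warm: switch the heater off
        # Inside the band the previous state is kept, which avoids rapid toggling.
        return self.heater_on


if __name__ == "__main__":
    tank = Thermostat(setpoint_c=26.0)
    for reading in [24.8, 25.6, 26.3, 26.7, 25.9, 25.4]:
        print(reading, tank.update(reading))
```

Called every few seconds with a fresh sensor reading, `update` is the whole "process control" step in miniature.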

Once, I tried upgrading the old firmware, and the entire system crashed. That’s when I realized that the firmware running on the PCB is like the heartbeat of the entire device; if it malfunctions, even the best hardware is useless. That experience taught me a valuable lesson: hardware is the skeleton, but firmware is the soul.

Many smart home devices now use this approach. For example, that desk lamp that automatically adjusts its brightness—do you think it simply senses light? Actually, it has a small process control system inside that constantly adjusts parameters, which is far more complex than simple on/off control.
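A continuous adjustment loop of this kind is commonly built as a PI (proportional-integral) controller. The sketch below is a hypothetical illustration, not the lamp’s actual firmware: `BrightnessController`, the gains, and the toy room model (100 lux of ambient light plus up to 400 lux from the lamp) are all assumptions.

```python
class BrightnessController:
    """Hypothetical PI loop that drives a lamp's PWM duty cycle toward a target light level."""

    def __init__(self, target_lux: float, kp: float = 0.002, ki: float = 0.0005):
        self.target_lux = target_lux
        self.kp = kp            # proportional gain (assumed)
        self.ki = ki            # integral gain (assumed)
        self.integral = 0.0     # accumulated error

    def update(self, measured_lux: float, dt: float = 1.0) -> float:
        """Return a duty cycle in [0, 1] for the next control period."""
        error = self.target_lux - measured_lux
        candidate = self.integral + error * dt
        duty = self.kp * error + self.ki * candidate
        if 0.0 <= duty <= 1.0:
            # Simple anti-windup: only accumulate error while the output is not saturated.
            self.integral = candidate
        return min(max(duty, 0.0), 1.0)


if __name__ == "__main__":
    ctrl = BrightnessController(target_lux=300.0)
    duty = 0.0
    for _ in range(200):
        measured = 100.0 + 400.0 * duty   # toy room model: ambient light plus lamp output
        duty = ctrl.update(measured)
    print(round(duty, 3))                 # settles near 0.5
```

The integral term is what lets the lamp hold the target exactly as ambient light changes; a pure on/off switch could only flicker around it.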

Large industrial PLC systems certainly utilize process control module PCBs to their fullest extent, but I think what’s truly interesting is how these technologies are quietly entering our daily lives. From coffee makers to air purifiers, more and more home appliances are starting to have this intelligent regulation capability.

I even think that in the future, we might see smaller and more flexible process control solutions. After all, not all devices need industrial-grade robustness; sometimes, lightweight solutions are more practical.

Have you ever had a similar experience, where you discovered that an everyday item was much smarter than you thought?

I’ve been thinking about process control lately, and I’ve noticed that when many people hear “PCB,” they think only of printed circuit boards. In operating systems, however, PCB also stands for Process Control Block, which is more like a notebook: every running process has one, recording what it is currently doing, where it will go next, and what resources it has at its disposal.
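A minimal sketch of such a “notebook” as a data structure, assuming a simplified set of fields and a five-state process model. The `PCB` class and its field names are hypothetical; real kernels (Linux’s `task_struct`, for example) store far more.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class State(Enum):
    NEW = auto()
    READY = auto()
    RUNNING = auto()
    BLOCKED = auto()
    TERMINATED = auto()


# Legal moves in a simple five-state process model.
ALLOWED = {
    State.NEW: {State.READY},
    State.READY: {State.RUNNING},
    State.RUNNING: {State.READY, State.BLOCKED, State.TERMINATED},
    State.BLOCKED: {State.READY},
    State.TERMINATED: set(),
}


@dataclass
class PCB:
    """Hypothetical, simplified process control block: one 'notebook' per process."""
    pid: int
    state: State = State.NEW
    program_counter: int = 0                                  # where execution resumes
    registers: dict[str, int] = field(default_factory=dict)   # saved CPU context
    mem_base: int = 0                                         # start of the process's memory
    mem_limit: int = 0                                        # size of that memory region
    open_files: list[str] = field(default_factory=list)       # resources it holds

    def transition(self, new_state: State) -> None:
        """Move to a new state, rejecting transitions the model does not allow."""
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"illegal transition {self.state.name} -> {new_state.name}")
        self.state = new_state
```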

I remember an interesting situation I encountered while debugging a program: a process suddenly froze. After much investigation, I discovered the problem was with the memory address recorded in its PCB – like you’ve written down the wrong house number, the system couldn’t find where it was supposed to go. This is when you truly appreciate the importance of the PCB; it’s like a temporary pass issued to everyone entering the city to conduct business, specifying where you can go and how long you can stay.
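The “wrong house number” failure can be illustrated with the simplest memory-protection scheme: base and limit values recorded per process. This is a simplified model assumed for illustration (modern systems use page tables), and `translate` and `AddressFault` are hypothetical names.

```python
class AddressFault(Exception):
    """Raised when a process touches memory outside its recorded region."""


def translate(logical: int, base: int, limit: int) -> int:
    """Map a logical address to a physical one using per-process base/limit values."""
    if not 0 <= logical < limit:
        raise AddressFault(f"address {logical:#x} is outside limit {limit:#x}")
    return base + logical


if __name__ == "__main__":
    print(hex(translate(0x10, base=0x4000, limit=0x1000)))  # 0x4010
    try:
        translate(0x1200, base=0x4000, limit=0x1000)
    except AddressFault as err:
        print("fault:", err)
```

A wrong `limit` makes valid accesses fault, while a wrong `base` is worse: translation succeeds but lands in the wrong place, much like the frozen process in the story.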

Speaking of PCB design, I find it particularly interesting how it balances detail and conciseness. Too much detail takes up too much space, while too little detail makes it easy to miss things. It’s like the bag you carry when you go out – it needs to hold all the essentials but shouldn’t be too heavy.

I’ve seen some systems with very clever PCB designs. For example, some store less frequently used information elsewhere and retrieve it only when needed, which saves space without hurting efficiency. This reminds me of the old-fashioned phone books I used to use, with frequently used numbers at the front and less frequently used ones at the back.

Many modern systems manage processes by dividing the PCB into several parts. It’s a bit like dividing a person’s file into several folders: basic information, work records, health status, etc. You only retrieve the part you need, instead of having to access the entire file every time.
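The split into frequently and rarely used parts can be sketched as a small “hot” record plus a “cold” part that is loaded on first access and then cached. The field names and the `load_cold` callback are hypothetical; the point is only the lazy-loading pattern.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class ColdInfo:
    """Rarely needed details (hypothetical fields), kept out of the hot path."""
    cpu_time_ms: int
    owner_uid: int
    cwd: str


@dataclass
class SplitPCB:
    """Hot fields live inline; the cold part is fetched only on first access."""
    pid: int
    state: str
    program_counter: int
    load_cold: Callable[[int], ColdInfo] = field(repr=False)
    _cold: Optional[ColdInfo] = field(default=None, repr=False)

    @property
    def cold(self) -> ColdInfo:
        if self._cold is None:                  # first access: fetch and cache
            self._cold = self.load_cold(self.pid)
        return self._cold
```

The scheduler touches only `pid`, `state`, and `program_counter` on every switch, so those stay small and fast; accounting or ownership queries pay the lookup cost once.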

It’s quite amazing to think that such a small data structure can keep the entire system running smoothly. It’s like the dispatch slips given to each vehicle in a traffic control center; although inconspicuous, without them, the entire traffic system would collapse. Every time I see so many processes in the system, each busy with its own tasks without interfering with each other, I realize what a remarkable design this little PCB is.

While debugging an embedded system recently, I suddenly realized something: those seemingly tedious hardware parameters are actually very similar to human states. For example, memory is like our short-term memory, with limited capacity but fast access speed; while the PCB is more like the human skeletal structure, providing stable support while flexibly adapting to environmental changes.

I remember once during a high-temperature test, a circuit board overheated because of uneven solder mask thickness. This makes me think that many system failures stem not from core algorithm problems but from flaws in the basic manufacturing process. We tend to focus on software optimization while neglecting the actual operating environment of the process control module.

There’s a very interesting phenomenon: many engineers now excessively pursue chip performance metrics, but neglect the most basic connection reliability. I’ve handled many repaired devices, and cases of insufficient processor computing power are rare; instead, basic manufacturing issues like PCB plating wear and poor memory contact are more common.

Thickness is a parameter that is particularly easy to underestimate. I once used an industrial module from a certain brand whose gold plating was 30% thicker than the standard. Even after ten years and three times the expected number of insertions and removals, it still maintained good contact. This kind of attention to detail is what truly tests a manufacturer’s capabilities.

The testing phase is where the truth comes out. I’m used to doing vibration testing at the prototype stage: not just simple power-on checks, but continuous vibration that simulates real-world conditions. This is often where hidden faults in the control module’s state transitions surface, faults that static testing cannot detect at all.

Many teams now spend a lot of energy on algorithm optimization, which is certainly important. But in my experience, at least 30% of field failures stem from fundamental hardware issues. For example, seemingly low-level bugs like memory address mapping errors often only manifest themselves at specific temperature thresholds.

In a project I’m currently working on, we’ve significantly strengthened the PCB material selection standards. It’s not about blindly pursuing high-end materials.

When designing industrial control systems, I’ve found that what truly affects stability is often not the sophisticated features, but the attention to detail at the fundamental level. I remember once debugging equipment in the field; the program logic was fine, but the equipment kept malfunctioning intermittently. Later, we discovered that a sensor’s signal wire was running alongside a motor cable, and electromagnetic interference completely overwhelmed the signal. This made me realize that isolation measures in hardware design should never be just theoretical.

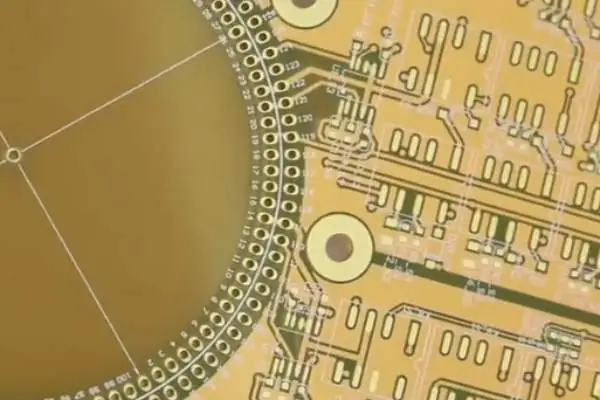

Physical separation of different functional areas should be considered during the PCB layout stage. For example, high-frequency digital circuits and analog signal acquisition modules should be kept as far apart as possible. This is not simply a matter of drawing a dividing line; it requires planning the wiring paths together with the chassis structure. Sometimes it’s worth adding a few extra centimeters of wire length to avoid interference sources.

When discussing the design principles of a process control module PCB, many people start with component selection, but I prefer to start with the system architecture and clarify the data flow between functional modules. This lets me anticipate which parts need enhanced isolation and which signals require special handling when designing the circuit board.

Noise suppression in the power supply section is often underestimated. During one test, we found that the system experienced data fluctuations at the moment the motor started. Adding a three-stage filter at the power input solved the problem. Now, I always leave a 30% margin for the power supply and always perform noise spectrum analysis.
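The two numbers in this paragraph can be made concrete with a little arithmetic. The sketch below assumes the three-stage filter is three identical, non-interacting first-order RC low-pass stages (the actual filter topology is not specified), and the 20 W load, 1 kHz corner frequency, and 10 kHz noise frequency are illustrative values.

```python
import math


def supply_rating_w(load_w: float, margin: float = 0.30) -> float:
    """Minimum supply rating that leaves the given headroom above the load."""
    return load_w * (1.0 + margin)


def rc_stage_gain(f_hz: float, fc_hz: float) -> float:
    """Magnitude response of one first-order RC low-pass stage."""
    return 1.0 / math.sqrt(1.0 + (f_hz / fc_hz) ** 2)


def attenuation_db(f_hz: float, fc_hz: float, stages: int = 3) -> float:
    """Attenuation of identical stages, assuming ideal buffering (no loading between stages)."""
    gain = rc_stage_gain(f_hz, fc_hz) ** stages
    return -20.0 * math.log10(gain)


if __name__ == "__main__":
    print(supply_rating_w(20.0))                           # about 26 W
    print(round(attenuation_db(10e3, 1e3, stages=1), 1))  # 20.0
    print(round(attenuation_db(10e3, 1e3, stages=3), 1))  # 60.1
```

Under these assumptions one stage gives about 20 dB of attenuation at 10 kHz and three stages about 60 dB, which is why stacking stages works well against motor-start noise far above the corner frequency. Real RC stages load each other, so a measured filter will fall somewhat short of this ideal.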

Regarding the choice of isolation technology, I believe we shouldn’t blindly pursue high specifications. Optocoupler isolation is reliable but has limited response speed, whereas capacitive isolation chips have the advantage in high-speed scenarios. The key is to match the technology to the specific application. Once, to reduce costs, we replaced a magnetic coupling isolation scheme with capacitive isolation, and the result was false triggering in high-temperature environments, because we had not adequately considered the operating environment.

Many engineers mechanically apply standard practices when it comes to component derating, but I think it’s necessary to adjust based on the actual operating environment. For example, in a well-ventilated control cabinet, the expected lifespan of capacitors can be more optimistic than in a confined space, but this requires accumulating sufficient field data to support it.
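One common way to put numbers on this is the rule of thumb for aluminium electrolytic capacitors: life roughly doubles for every 10 °C below the rated temperature. The function below applies that rule; the 2000 h / 105 °C rating and the two cabinet temperatures are hypothetical, and capacitor manufacturers publish their own, more detailed life formulas.

```python
def electrolytic_life_hours(rated_hours: float, rated_temp_c: float,
                            operating_temp_c: float) -> float:
    """Rule-of-thumb life estimate: life roughly doubles per 10 deg C below the rating."""
    return rated_hours * 2 ** ((rated_temp_c - operating_temp_c) / 10.0)


if __name__ == "__main__":
    # Hypothetical part rated 2000 h at 105 deg C, in two assumed cabinet conditions.
    print(electrolytic_life_hours(2000, 105, 65))  # well-ventilated: 32000.0
    print(electrolytic_life_hours(2000, 105, 85))  # confined space: 8000.0
```

Under these assumptions the same part is estimated at about 32,000 hours in a well-ventilated cabinet versus about 8,000 hours in a confined one. A fourfold spread like this is why field temperature data matters more than a blanket derating rule.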

Recently, when designing remote I/O modules, I’ve been particularly focused on maintainability. For example, adding test points to the process control module PCB increases cost slightly, but the time field personnel save in locating faults makes it worthwhile. One customer reported that, thanks to the reserved diagnostic interfaces, they were able to solve 90% of their field problems themselves. The value of this kind of design is hard to measure in BOM cost.

In terms of thermal management, I habitually use a thermal imaging camera to verify simulation results. Once, I found that a chip measured 8 °C hotter than the simulation predicted. We later traced this to insufficient copper fill in the heat dissipation vias. Now, when doing thermal design, I require the board manufacturer to provide its process parameters. These details often determine how a product performs in harsh environments.
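A rough one-dimensional estimate shows why via fill matters: heat conducted through a via passes mainly through its copper cross-section, so a plated-only barrel conducts far less than a solid copper fill. The sketch below is a simplified model under stated assumptions (conduction through copper only, ignoring the FR-4, solder, and spreading resistance); the 1.6 mm board, 0.3 mm drill, 25 µm plating, and nine-via array are illustrative values, not the author’s design.

```python
import math

K_COPPER = 385.0  # W/(m*K), approximate thermal conductivity of copper


def via_resistance(board_m: float, drill_m: float, plating_m: float,
                   filled: bool = False) -> float:
    """1-D conduction resistance (K/W) of one via through the board thickness."""
    r_out = drill_m / 2
    if filled:
        area = math.pi * r_out ** 2                  # solid copper fill
    else:
        r_in = r_out - plating_m
        area = math.pi * (r_out ** 2 - r_in ** 2)    # plated barrel only
    return board_m / (K_COPPER * area)


def array_resistance(n_vias: int, r_single: float) -> float:
    """Identical vias conducting in parallel."""
    return r_single / n_vias


if __name__ == "__main__":
    plated = array_resistance(9, via_resistance(1.6e-3, 0.3e-3, 25e-6))
    filled = array_resistance(9, via_resistance(1.6e-3, 0.3e-3, 25e-6, filled=True))
    power_w = 1.0
    print(f"plated: {plated:.1f} K/W, rise {power_w * plated:.1f} K")
    print(f"filled: {filled:.1f} K/W, rise {power_w * filled:.1f} K")
```

With these numbers the plated-only array works out to roughly 21 K/W versus roughly 6.5 K/W when filled, so the same heat flow produces a temperature rise through the vias about three times larger. The exact figures depend on the real stack-up, but the ratio shows how incomplete fill can push a chip several degrees above a simulation that assumed full fill.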

Ultimately, the core of industrial-grade design is not about stacking high-end components, but about considering the complexity of real-world operating conditions at every stage. It’s like building with blocks; even if individual blocks are exquisite, if the connecting structure is unreliable, the entire system will still collapse.

I’ve always felt that many people underestimate the small components in servers. While everyone focuses on the big components like CPUs, memory, and hard drives, they often overlook the unsung heroes that actually keep the whole system stable, such as the process control module. This sounds quite technical, but in simple terms it’s a dedicated housekeeper on the server board that handles miscellaneous tasks. It doesn’t take part in your business computations, but without it, the entire system would be like a kite with a broken string.

The most vivid example I’ve seen was a troubleshooting incident I helped a friend’s company with last year. Their servers were restarting inexplicably in the middle of the night, and the logs were completely clean, offering no clues. We eventually traced the problem to a fingernail-sized PCB module on the motherboard that was responsible for power sequencing and temperature monitoring. Because it had malfunctioned, the CPU could overheat without raising any alarm and voltage fluctuations no longer triggered power protection, leaving forced restarts as the system’s only way to recover.
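The module’s job as described here, bringing power rails up in order and then watching temperature and voltage, can be sketched as follows. This is hypothetical logic for illustration only: `Rail`, `Supervisor`, the ±5 % power-good window, and the 90 °C limit are assumptions, and the hardware reads are passed in as callbacks.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Rail:
    name: str
    nominal_v: float
    tolerance: float = 0.05  # assumed +/-5 % power-good window

    def power_good(self, measured_v: float) -> bool:
        return abs(measured_v - self.nominal_v) <= self.tolerance * self.nominal_v


class Supervisor:
    """Hypothetical management-module logic: sequence rails up, then monitor them."""

    def __init__(self, rails: List[Rail], read_v: Callable[[str], float],
                 read_temp_c: Callable[[], float], max_temp_c: float = 90.0):
        self.rails = rails
        self.read_v = read_v              # hardware voltage read, supplied by caller
        self.read_temp_c = read_temp_c    # hardware temperature read, supplied by caller
        self.max_temp_c = max_temp_c
        self.enabled: List[str] = []
        self.alarms: List[str] = []

    def shutdown(self, reason: str) -> None:
        self.alarms.append(reason)  # record an alarm instead of failing silently
        while self.enabled:
            self.enabled.pop()      # reverse order; real code would switch each regulator off

    def power_up(self) -> bool:
        for rail in self.rails:     # bring rails up one at a time, in the listed order
            self.enabled.append(rail.name)
            v = self.read_v(rail.name)
            if not rail.power_good(v):
                self.shutdown(f"{rail.name} not good: {v:.2f} V")
                return False
        return True

    def poll(self) -> bool:
        """One monitoring pass; returns False if protection has tripped."""
        if not self.enabled:
            return False
        t = self.read_temp_c()
        if t > self.max_temp_c:
            self.shutdown(f"over-temperature: {t:.1f} C")
            return False
        for rail in self.rails:
            v = self.read_v(rail.name)
            if not rail.power_good(v):
                self.shutdown(f"{rail.name} drifted: {v:.2f} V")
                return False
        return True
```

The key design choice is that every fault raises an alarm and shuts rails down in a controlled order, which is exactly the behaviour that went missing when the real module failed silently.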

The design of this module is particularly interesting. It needs to remain functional even when the main system is completely powered off, like a backup battery in a car. Think about it: the remote management function that allows you to reinstall the operating system on a server from thousands of miles away relies on this small component working reliably even when the main system is shut down.

Moreover, the reliability requirements for these PCB modules are even more stringent than for the main system. A main CPU crashing occasionally might only affect business operations, but a problem with this control module could directly lead to hardware damage. I once disassembled an old server, and its process control board actually used military-grade capacitor components, and the heatsink area was almost as large as the CPU’s.

More and more devices are now emphasizing this clear division of labor in their design. Separating basic protection functions from the main system and entrusting them to dedicated hardware modules improves reliability and simplifies maintenance.

Next time you see those inconspicuous little boards in a server, take a closer look—they might be the most crucial guardians of the entire device!

I recently discovered an interesting phenomenon while studying industrial control systems—many people think of process control modules as being too complex. In reality, this seemingly sophisticated device is essentially just a notebook.

Imagine handling several tasks at once. You would probably keep a notebook recording each task’s progress and what needs to be done next, right? That is essentially what a process control module does, and it doesn’t require a complex structural design to function.
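The notebook analogy maps directly onto a task table that records each task’s next step. A minimal sketch, assuming a simple cooperative round-robin scheme and hypothetical names (`TaskRecord`, `run_round_robin`):

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TaskRecord:
    """One notebook entry: the task, its steps, and how far it has got."""
    name: str
    steps: List[str]
    next_step: int = 0

    @property
    def done(self) -> bool:
        return self.next_step >= len(self.steps)


def run_round_robin(tasks: List[TaskRecord]) -> List[str]:
    """Advance each unfinished task by one step per pass, checking it off in its record."""
    log: List[str] = []
    while not all(t.done for t in tasks):
        for t in tasks:
            if not t.done:
                log.append(f"{t.name}: {t.steps[t.next_step]}")
                t.next_step += 1   # the record now says what comes next
    return log


if __name__ == "__main__":
    for line in run_round_robin([TaskRecord("tank", ["fill", "heat"]),
                                 TaskRecord("mixer", ["stir"])]):
        print(line)
```

Nothing here is sophisticated: the controller only needs to remember, per task, where it left off.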

I remember visiting a factory once and seeing an old piece of equipment still running. The engineer told me that the machine’s controller had been in use for over ten years without any problems. I carefully examined its circuit board, and it was incredibly simple: just a few basic chips and a heatsink.

Many manufacturers now like to make controllers overly complicated, adding all sorts of redundant functions and complex algorithms, which actually makes them more prone to problems. I think the key is to let each module perform its specific function and not try to cram everything onto one PCB.

Once, a piece of equipment in our lab suddenly crashed. After troubleshooting for a long time, we found that the heatsink on a controller had come loose, causing it to overheat. This kind of problem is actually quite common but often overlooked.

Speaking of temperature, I don’t think it’s necessary to excessively pursue a wide operating temperature range unless it’s for a special environment. The temperature in most factory workshops is quite stable, so focusing on heatsink design is more practical.

Vibration problems are also exaggerated. Unless you’re mounting the equipment on an excavator, ordinary mechanical vibration has little effect on modern electronic components. Instead of spending a lot of money on vibration damping, it’s better to make the connectors more reliable.

The most reliable controller I’ve ever seen was from an old German brand. Its circuit board was so thick you could use it as a brick. Although the technology wasn’t cutting-edge, it was durable. Sometimes simple and robust is the best option.

The current trend is to integrate all functions into one module, but I think this direction is flawed. Things that should be separate should remain separate; putting too many things on one board can lead to mutual interference.

Ultimately, a process control module is just a manager; there’s no need to think of it as something mysterious. The important thing is to let it reliably perform its basic functions and not expect it to be a superhero.

After years of hardware design, I’ve gradually realized something: truly good process control module PCBs are never built by simply piling on components. Many people think that using the best components and the most complex wiring will result in a reliable product. This is completely wrong. I’ve seen too many designs that look great on paper but constantly malfunction in practice. True stability often comes from attention to detail.

I remember once debugging a piece of equipment: every component met the specifications, but the whole system kept having problems. We later discovered that a few seemingly insignificant grounding points on the PCB hadn’t been implemented properly. This kind of problem is particularly insidious, because it’s not a failed component or a broken wire but signal interference. Since then, I’ve paid special attention to the “invisible” aspects of PCB design, such as how power and signal lines are isolated from each other and how modules running at different frequencies are laid out. These details are what truly determine the reliability of the entire module.

A good PCB design is like a symphony. Each part must fulfill its specific function while also working in coordination with others. Sometimes, for overall stability, it’s even necessary to intentionally limit the performance of a particular component. It’s like a conductor who wouldn’t let all the instruments play at their loudest simultaneously—excessive performance can become a source of instability. This sense of balance needs to be gradually understood through practical debugging.

I particularly dislike the view that simply considers a PCB as a “circuit carrier.” It’s more like the skeleton and neural network of a system. A well-designed PCB module can enable ordinary components to perform exceptionally well; conversely, a poor design can render even top-tier components unreliable. It’s like building a house—even the best building materials won’t withstand the elements if the structural design is flawed.

When working on new projects now, I spend a significant amount of time on upfront planning. Instead of rushing to draw schematics and select components, I first think carefully about the working environment the module will face and the extreme conditions it might encounter. This kind of thinking is more important than all the subsequent technical steps. Because only by understanding “why it’s designed this way” can we create truly reliable PCBs. After all, what we pursue is never the impressive specifications on paper, but stable performance in actual operation.

While recently debugging an old industrial control system, I discovered an interesting phenomenon. The most crucial component in that dusty control cabinet wasn’t the fancy processor chips, but a seemingly unremarkable circuit board. This board was responsible for coordinating the rhythm of the entire production line, like a conductor of an orchestra, ensuring that each piece of equipment performed the correct action at the correct time.

This module responsible for coordination reminded me of the concept of the Process Control Block (PCB) in operating systems. Although one is at the hardware level and the other at the software level, they are essentially doing the same thing—maintaining order. I remember one time when our factory’s conveyor belt suddenly went out of control because a timer in this control module malfunctioned, causing the entire production line to fail like a chain of dominoes.
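A common safeguard against a stalled timer or control loop is a watchdog that expects a periodic heartbeat and latches into a fault state when one is missed, so the line can be stopped safely instead of running out of control. A minimal sketch, with the `Watchdog` class and the timeout values as hypothetical choices; time is passed in explicitly so the logic is easy to test.

```python
class Watchdog:
    """Hypothetical software watchdog: trips if no heartbeat arrives within timeout_s."""

    def __init__(self, timeout_s: float, now: float = 0.0):
        self.timeout_s = timeout_s
        self.last_kick = now
        self.tripped = False

    def kick(self, now: float) -> None:
        """Heartbeat from the control loop; ignored once the watchdog has tripped."""
        if not self.tripped:
            self.last_kick = now

    def check(self, now: float) -> bool:
        """Return True while healthy; latch the fault once a deadline is missed."""
        if now - self.last_kick > self.timeout_s:
            self.tripped = True
        return not self.tripped


if __name__ == "__main__":
    dog = Watchdog(timeout_s=0.5)
    dog.kick(0.4)
    print(dog.check(0.8))   # True: last heartbeat 0.4 s ago
    print(dog.check(1.0))   # False: 0.6 s without a heartbeat, so the line should stop
```

In a real controller the trip would typically drive the outputs to a safe state, for example stopping the conveyor motor, and a hardware watchdog is preferable because it keeps working even if the software itself hangs.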

Many engineers now prefer to pursue the latest processor models, but I believe that what truly determines system stability is often these inconspicuous basic modules. They are like the load-bearing walls of a building; although not flashy, they support the operation of the entire structure.

I’ve seen too many cases where the focus is placed on improving computing speed while neglecting basic control. One client once complained that their new system frequently crashed, and it was later discovered that the problem was due to flaws in the process control block (PCB) management mechanism, leading to multiple tasks competing for resources. This problem couldn’t be solved by simply replacing the CPU with a faster one.
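One way a control block can stop tasks from competing for the same resource is to record ownership explicitly and refuse a second allocation. The sketch below is a hypothetical illustration (`TaskBlock` and `ResourceTable` are invented names), not the client’s actual system.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class TaskBlock:
    """Hypothetical per-task control block that records which resources it holds."""
    pid: int
    held: Set[str] = field(default_factory=set)


class ResourceTable:
    """Single source of truth for who owns each shared resource."""

    def __init__(self) -> None:
        self.owner: Dict[str, int] = {}

    def acquire(self, task: TaskBlock, resource: str) -> bool:
        holder = self.owner.get(resource)
        if holder is not None and holder != task.pid:
            return False                    # already owned: caller must wait, no double allocation
        self.owner[resource] = task.pid
        task.held.add(resource)
        return True

    def release(self, task: TaskBlock, resource: str) -> None:
        if self.owner.get(resource) == task.pid:   # only the owner may release
            del self.owner[resource]
            task.held.discard(resource)


if __name__ == "__main__":
    table = ResourceTable()
    a, b = TaskBlock(pid=1), TaskBlock(pid=2)
    print(table.acquire(a, "conveyor"))  # True
    print(table.acquire(b, "conveyor"))  # False: task 1 still holds it
    table.release(a, "conveyor")
    print(table.acquire(b, "conveyor"))  # True
```

This sketch is single-threaded; in a real multitasking system the check and the update in `acquire` must happen atomically (under a lock or a kernel primitive), which is precisely the kind of guarantee a faster CPU cannot provide.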

Modern industrial environments demand increasingly high precision in control. For example, the motion control units in medical equipment must ensure that every movement is precise, and this relies on reliable hardware support. Sometimes I wonder if we are too focused on glamorous new technologies and neglecting these fundamental but crucial parts.

With the development of edge computing, these control modules are being endowed with more intelligent functions. But no matter how they evolve, their core mission remains unchanged—ensuring that every action is executed precisely and every process runs in an orderly manner. This is perhaps the true cornerstone of industrial digitalization.
