
I recently discussed servers with a friend who does hardware design and discovered an interesting phenomenon. Many people think that choosing a server is simply about looking at the CPU model or memory size. In fact, what truly sets the performance ceiling is often the seemingly insignificant components, such as the PCB that carries everything.

I remember last year our team tested a batch of machines used for AI training. Even though the GPU models listed on the configuration sheet were the same, the actual performance varied significantly. Upon disassembly, the problem was discovered to lie in the server PCBs provided by different vendors. Some boards had meticulous wiring and excellent signal interference control, resulting in almost no latency in data transmission between GPUs. However, other boards began to exhibit stability issues under high loads.

Many companies now partner with low-priced server PCB vendors to save costs. While this may seem economical in the short term, seemingly minor design differences become magnified in the long run. Especially when your business requires massive parallel computing, a high-quality PCB can unleash the full potential of the system, while a mediocre design might not even reach 70% of the advertised performance.

The most extreme example I’ve seen is a startup that, rushing to meet a project deadline, bought a batch of general-purpose servers and then found that model training took almost twice as long as expected. Later, they switched to a custom PCB optimized for multi-GPU interconnects, and overall efficiency improved by over 40%. This made me realize that hardware investment cannot be based solely on surface-level specifications.

Another easily overlooked point is that the requirements for PCBs vary drastically depending on the application scenario. For example, machines used in data centers may prioritize heat dissipation and stability, while edge computing devices need to consider factors like shock resistance and moisture protection. I previously worked on an industrial project where their servers needed to be placed in a factory workshop with fluctuating temperatures and dust issues. Ordinary designs simply couldn’t withstand this; ultimately, we had to find a supplier with specialized processes to solve the problem.

Ultimately, choosing server hardware is like assembling building blocks—every piece must fit perfectly. The PCB, as the skeleton connecting all the core components, often determines the ceiling of the entire system. It’s better to invest more effort in the underlying design from the beginning than to struggle with fixes later.

Sometimes I find the hardware industry quite interesting—the most critical aspects are often the easiest to overlook. But those who truly understand the industry know that those unseen details are where the difference lies.

Every time I see those intricate and complex internal server diagrams, I think about one question—why do the same chipsets perform so differently on machines from different manufacturers? The answer may lie hidden in those seemingly insignificant printed circuit boards.

I’ve worked with many server PCB vendors and their technicians, and I’ve noticed an interesting phenomenon—the real performance ceiling isn’t determined by the most visible motherboard, but rather by the small and medium-sized boards responsible for signal transmission. A friend who works in data center operations complained to me about a batch of domestically produced servers they purchased that consistently experienced inexplicable signal delays. Upon disassembly, they discovered that a micrometer-level misalignment between layers on a certain auxiliary board caused phase distortion in high-frequency signals as they traversed different media.

This minute error might be imperceptible on a regular office computer, but in a cloud environment that handles massive numbers of requests simultaneously, it becomes a fatal problem. I remember once visiting a PCB foundry where the quality inspector pointed to an image on the X-ray machine and told me that high-end servers now require layer-to-layer alignment in multilayer boards to be held within one-third the diameter of a human hair; otherwise, high-speed signals behave like a sports car forced through a winding alley, wasting energy at every turn.

Interestingly, this precision has spurred a new industrial division of labor. Some large international manufacturers outsource the production of basic PCB models to lower-cost suppliers, but insist on completing the lamination process for core high-speed boards in their own cleanrooms. I once witnessed the backplane production process of a certain brand of servers. They used a laser alignment system combined with temperature-controlled lamination equipment, performing what amounted to precision surgery on a circuit board. Even the airflow speed in the workshop was monitored in real time.

From a user’s perspective, we don’t need to know the specific parameters of back-drilling depth or plated via filling. However, when some servers scale linearly under high-concurrency workloads while others with the same configuration hit performance bottlenecks early on, the cause is often that their PCB suppliers differ in how well they have mastered these basic processes. Like building with blocks, even if all the blocks meet the standard dimensions, if the mortise and tenon joints are not precise enough, the entire structure will still sway easily.

Now, more and more domestic companies are beginning to pay attention to this aspect. A Shenzhen manufacturer I know has even established a dedicated signal integrity laboratory. Their engineers use vector network analyzers to repeatedly test the high-frequency characteristics of different board combinations. This meticulous attention to detail has indeed allowed their products to gradually gain a foothold in the high-end market. After all, in the era of data deluge, the copper foil tracks carrying bit signals are essentially the canals of the digital world; their smoothness directly determines the speed of computing power.

I’ve seen many people focus on the fancy features when discussing servers, but the real determinant of stability is often the PCB hidden inside the chassis. Choosing a reliable server PCB supplier is like laying the foundation for a house; the differences may not be immediately apparent, but they become glaringly obvious under high loads or continuous operation.

I remember visiting a data center once, and an engineer pointed to racks full of servers, saying that these machines are most vulnerable to backplane problems. A well-designed backplane acts like a highway overpass, ensuring smooth data flow; a poorly designed one can cause signal congestion and even crash an entire row of servers. That’s when I truly understood why established suppliers are willing to invest more in multilayer boards with 20 or more layers—every minute of downtime in a data center is a significant financial loss.

Speaking of PCB design, one detail is particularly interesting. Good suppliers use thicker copper foil in the power module area. While it may seem like just a difference in material thickness, thicker copper has lower resistance, so it generates less heat at the same current and spreads that heat more effectively. I’ve seen some manufacturers cut corners on materials in this area to reduce costs, resulting in machines throttling after just three days of continuous operation. Conversely, boards that genuinely use 2-ounce copper foil stay stable even in hot server rooms. In GPU-intensive AI training servers, for example, the copper thickness in the power supply module largely determines whether peak computing power can be sustained, like equipping an engine with thicker fuel lines to ensure a stable fuel supply at high RPMs.
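To put rough numbers on the copper thickness point, here is a minimal Python sketch comparing the DC resistance and resistive heating of the same power trace in 1 oz and 2 oz copper. The trace length, width, and current are hypothetical values chosen for illustration, and the calculation ignores temperature rise and skin effect, so treat it as a back-of-the-envelope estimate rather than a design rule.

```python
# Illustrative sketch: DC resistance and I^2*R loss of a power trace
# for 1 oz vs 2 oz copper. Dimensions and current are assumed values.

COPPER_RESISTIVITY = 1.68e-8   # ohm*m, copper at roughly 20 C
OZ_THICKNESS_M = 34.8e-6       # nominal thickness of 1 oz/ft^2 copper foil


def trace_resistance(length_m: float, width_m: float, copper_oz: float) -> float:
    """DC resistance of a rectangular trace: R = rho * L / (w * t)."""
    thickness_m = copper_oz * OZ_THICKNESS_M
    return COPPER_RESISTIVITY * length_m / (width_m * thickness_m)


def power_loss(current_a: float, resistance_ohm: float) -> float:
    """Resistive heating in watts: P = I^2 * R."""
    return current_a ** 2 * resistance_ohm


if __name__ == "__main__":
    # Hypothetical power trace: 50 mm long, 5 mm wide, carrying 20 A.
    length, width, current = 0.05, 0.005, 20.0
    for oz in (1, 2):
        r = trace_resistance(length, width, oz)
        print(f"{oz} oz: R = {r * 1e3:.2f} mOhm, loss = {power_loss(current, r):.2f} W")
```

Doubling the copper weight halves the resistance, so the same current dissipates half the heat in the trace itself, which is the effect described above.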

Recently, while helping a friend choose a server, I noticed a phenomenon: many people excessively pursue high-end CPU models while neglecting the quality of the PCBs that support these chips. Even the most powerful processors still need to communicate with memory and hard drives through circuit boards. Insufficient routing precision or inadequate impedance control is like driving a Ferrari on a muddy road. Especially now, with the widespread adoption of PCIe 5.0, the requirements for signal integrity have risen even further. The edges of high-speed signal traces need to be smooth and uniform; any burr or abrupt change in geometry creates an impedance discontinuity that reflects part of the signal, much like the snowy noise in a poor video feed.
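The link between impedance control and reflections can be illustrated with the standard transmission-line reflection coefficient. The sketch below is only an illustration: the 85 ohm nominal differential impedance and the 95 ohm out-of-tolerance section are assumed example values, and the function names are my own.

```python
import math


def reflection_coefficient(z_load: float, z0: float) -> float:
    """Fraction of the incident voltage reflected at an impedance step:
    gamma = (Z_load - Z0) / (Z_load + Z0)."""
    return (z_load - z0) / (z_load + z0)


def return_loss_db(gamma: float) -> float:
    """Return loss in dB; a perfect match (gamma = 0) gives infinity."""
    if gamma == 0:
        return math.inf
    return -20.0 * math.log10(abs(gamma))


if __name__ == "__main__":
    z0 = 85.0       # assumed nominal differential impedance, ohms
    z_step = 95.0   # assumed section that drifted out of tolerance, ohms
    gamma = reflection_coefficient(z_step, z0)
    print(f"gamma = {gamma:.4f}, return loss = {return_loss_db(gamma):.1f} dB")
```

A single step like this reflects only a few percent of the signal, but a link crosses many vias, connectors, and layer transitions, so small mismatches add up quickly at PCIe 5.0 speeds.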

One supplier I’ve worked with impressed me; they simulate five years of continuous operation when testing their backplanes. They even use thermal imaging cameras to monitor temperature distribution under different loads, ensuring that the operating temperature of each connector stays within safe thresholds. Although customers won’t actually open the chassis to inspect these things, this meticulousness is reassuring. After all, servers aren’t fast-moving consumer goods; they need to stand the test of time. Sometimes choosing a supplier is like making friends; the differences might not be apparent in the short term, but their true worth is revealed in critical moments.

Speaking of changes in this industry, I think the most obvious is the increased emphasis on thermal design. In the past, everyone focused more on wiring density; now, the focus is on thermally conductive materials. Especially with the rise of GPU servers, localized heat generation has risen sharply, testing how well suppliers can balance routing efficiency and heat dissipation within limited space. I’ve seen some innovative approaches, such as embedding copper blocks around critical chips; although this increases manufacturing complexity, it does improve reliability in the long run. Some designs incorporate miniature cooling channels inside the PCB, allowing airflow to pass through the board itself and carry away heat.

Actually, there’s a simple way to judge PCB quality: by weight. Of course, heavier isn’t always better, but for the same size, a solidly built board will have a substantial feel. While this approach isn’t rigorous, it’s surprisingly useful when comparing products from different suppliers. After all, manufacturers willing to invest in basic materials usually won’t be lax in other details either. For example, a backplane of the same size using high-Tg material will be about 15% heavier than a regular FR4 backplane, and the material also holds its shape better at high temperatures.

Ultimately, choosing server components is a complex process. Relying solely on parameter lists can be misleading; a multi-dimensional comparison of actual performance is crucial. Some suppliers have impressive sample test data but lose stability in mass production, while some established brands lack outstanding technical specifications but excel at consistent quality control. There are no perfect solutions in this industry, only the most suitable balance for specific scenarios. For example, financial trading systems prioritize microsecond-level latency, while cloud service providers focus more on power efficiency, resulting in completely different PCB design priorities.

I remember being most impressed when I first opened a server chassis by the board crammed with components. Those intricate lines reminded me of a city’s transportation network—each line has its own purpose yet must coexist harmoniously.

When choosing a server PCB supplier, I particularly value their attention to detail. Once, during testing, we discovered that a certain capacitor was unstable at high frequencies. Without hesitation, the supplier readjusted the material formula. This relentless pursuit of quality is what truly earns trust.

Many people think wiring is simply connecting wires, but it’s not that simple. I’ve seen too many cases where improper power plane design caused overall system performance fluctuations. Good design allows current to flow smoothly like water through a calm riverbed, rather than encountering obstacles everywhere.

The integrity of the ground plane is often underestimated, but it’s actually the foundation of signal integrity. Once, in pursuit of a thinner board, we sacrificed the continuity of the ground plane and failed EMI testing as a result. Adding a dedicated shielding layer solved the problem, which made me realize that sometimes seemingly redundant design elements are the most necessary.

Capacitor selection is also crucial; bigger isn’t always better. I prefer to use capacitors with different characteristics in different areas, like prescribing different diets for different age groups. Areas near chips need fast-responding miniature capacitors, while the power input requires stable solid-state capacitors.
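One way to see why bigger isn’t always better is the self-resonant frequency of a capacitor: above it, parasitic inductance dominates and the part stops behaving like a capacitor. The sketch below uses the ideal series LC formula; the capacitance and parasitic inductance (ESL) values are hypothetical examples, not figures from any datasheet.

```python
import math


def self_resonant_frequency(capacitance_f: float, esl_h: float) -> float:
    """Self-resonant frequency of a capacitor modeled as a series LC:
    f = 1 / (2 * pi * sqrt(L * C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(esl_h * capacitance_f))


if __name__ == "__main__":
    # Hypothetical parts: (label, capacitance in farads, ESL in henries).
    parts = [
        ("small decoupling cap near the chip", 100e-9, 1e-9),
        ("bulk cap at the power input", 100e-6, 5e-9),
    ]
    for label, c, esl in parts:
        f = self_resonant_frequency(c, esl)
        print(f"{label}: self-resonance near {f / 1e6:.2f} MHz")
```

Under these assumed values the small capacitor stays effective to a much higher frequency than the bulk one, which is why the two are placed in different areas and do different jobs.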

What impresses me most is that this industry is constantly evolving. Yesterday’s perfect design might need to be scrapped and rebuilt today due to a new chip architecture. But it’s precisely this constant pushing of limits that makes this work so fascinating.

I’ve always felt that people making servers are too obsessed with fancy technical specifications. While everyone’s focused on chip performance benchmarks, few truly care about the foundation supporting those chips, or appreciate how crucial that seemingly ordinary PCB really is.

I remember visiting a data center renovation project last year. They had just replaced traditional air cooling with a liquid cooling system. Less than three months later, widespread failures occurred. Upon disassembly, it was discovered that coolant had seeped into the motherboard layers. Later, talking to the engineers, I understood the problem: the server PCB supplier was using boards with conventional manufacturing processes, completely neglecting the special protective measures needed for long-term contact with the cooling medium.

This incident made me realize a rather dangerous tendency in the industry—the belief that using the latest chips solves everything. The reality is, even the most powerful chips rely on the PCB to transmit signals and power. If the foundation isn’t solid, it’s like building a skyscraper on sand.

I’ve seen too many teams spend their entire budget on high-end chips, yet haggle desperately over the price of server PCBs. Once, while troubleshooting frequent server restarts at a friend’s company, I discovered their cheap PCBs didn’t even have a basic 6-layer structure; the power layer was as thin as paper. Such boards could barely withstand normal air cooling under high load, let alone support a liquid cooling system.

Now, some manufacturers are developing integrated liquid cooling modules, directly integrating the cooling channels into the PCB layers. This design is indeed ingenious, but the requirements for the board material and manufacturing precision are hellish. Suppliers need not only circuit design expertise but also mastery of materials science and fluid mechanics—something ordinary PCB factories simply can’t handle.

Several projects I’ve recently worked on are experimenting with replacing traditional FR4 materials with copper core boards. Although the cost is more than three times higher, the improvement in thermal conductivity is significant. This is particularly suitable for scenarios requiring compact arrangement of multiple high-power chips, since heat buildup is the number one killer of server stability.

Ultimately, choosing server components shouldn’t be based solely on surface-level parameters. Sometimes, spending a little more money on things you can’t see can save you countless troubles later. After all, nobody wants their heavily invested server cluster to fail because of a poorly made circuit board, right?

I recently chatted with a friend who works in data centers and noticed something interesting—when purchasing servers, their primary concern isn’t the CPU model or memory size, but rather repeatedly verifying the PCB supplier’s qualifications. This reminded me of ten years ago when people chose servers, basically only looking at the processor’s clock speed.

The situation is completely different now. With data transmission speeds exceeding 100Gbps, the PCB is no longer just a simple circuit carrier; it’s more like the neural network of the entire system. I once saw a test report from a manufacturer showing that the same chipset paired with server PCBs from different suppliers could result in performance differences of over 15%. This made me realize that so-called high-performance servers largely depend on that green circuit board.

I remember visiting an internet company’s server room last year, where an engineer pointed to servers being replaced and told me that these devices were being phased out after only two years of operation because the PCBs suffered severe signal loss under high loads. They ran an experiment, replacing the motherboards of old servers with PCBs made of a new generation of low-loss materials. With the same hardware configuration, service life was extended by three years.

Interestingly, many small and medium-sized manufacturers are still using ordinary FR-4 materials for server motherboards to save costs. While this may save money in the short term, long-term signal attenuation leads to a surge in data retransmission rates. The most extreme case I’ve seen involved a certain e-commerce platform experiencing frequent server crashes during a promotional period. The investigation revealed that PCB dielectric loss was causing excessive network card error rates.
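To give a feel for how much the laminate matters, the following sketch estimates dielectric loss per inch of trace from the loss tangent, using the usual plane-wave approximation. The material values are rough assumptions for a standard FR-4 and a generic low-loss laminate, not measurements, and 16 GHz is used because it is the Nyquist frequency of a 32 GT/s NRZ link such as PCIe 5.0. Conductor loss is ignored here.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0      # m/s
NEPER_TO_DB = 20.0 / math.log(10)   # about 8.686 dB per neper
METERS_PER_INCH = 0.0254


def dielectric_loss_db_per_inch(freq_hz: float, eps_r: float, tan_delta: float) -> float:
    """Approximate dielectric attenuation:
    alpha = pi * f * sqrt(eps_r) * tan_delta / c  (nepers per meter),
    converted to dB per inch."""
    alpha_np_per_m = math.pi * freq_hz * math.sqrt(eps_r) * tan_delta / SPEED_OF_LIGHT
    return alpha_np_per_m * NEPER_TO_DB * METERS_PER_INCH


if __name__ == "__main__":
    freq = 16e9  # Nyquist frequency for 32 GT/s NRZ signaling
    # Assumed, illustrative material properties: (name, eps_r, tan_delta).
    materials = [
        ("standard FR-4", 4.2, 0.020),
        ("low-loss laminate", 3.5, 0.003),
    ]
    for name, eps_r, tan_delta in materials:
        loss = dielectric_loss_db_per_inch(freq, eps_r, tan_delta)
        print(f"{name}: about {loss:.2f} dB/inch at {freq / 1e9:.0f} GHz")
```

Over a trace that runs ten inches or more, a gap of this size per inch can easily decide whether a link runs cleanly or spends its time retransmitting.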

A common misconception when choosing a server PCB supplier is that many people focus excessively on explicit parameters like layer count and trace width, neglecting more fundamental material properties. It’s like building a house and only caring about the luxurious interior, forgetting to check the foundation’s sturdiness. In fact, in high-speed signal transmission, the dielectric constant stability of the board material is more important than the routing precision.

Once, I participated in debugging a storage server that frequently crashed. Replacing three batches of memory modules didn’t solve the problem. Later, using a vector network analyzer, we traced the cause to a resonance created by a design flaw in the PCB power layer. This experience made me deeply realize that server stability is actually built on millimeter-level details.
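For readers curious where such a resonance might sit, a common first estimate treats a rectangular power/ground plane pair as a parallel-plate cavity with open edges. The sketch below applies that textbook model; the plane dimensions and dielectric constant are hypothetical, and a real board with decoupling capacitors, cutouts, and vias will deviate from it.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def plane_resonance_hz(a_m: float, b_m: float, eps_r: float, m: int, n: int) -> float:
    """Resonant frequency of mode (m, n) for a rectangular plane pair,
    using the open-edge cavity model:
    f = c / (2 * sqrt(eps_r)) * sqrt((m / a)^2 + (n / b)^2)."""
    return SPEED_OF_LIGHT / (2.0 * math.sqrt(eps_r)) * math.hypot(m / a_m, n / b_m)


if __name__ == "__main__":
    # Hypothetical 300 mm x 200 mm plane pair on a laminate with eps_r = 4.2.
    a, b, eps_r = 0.30, 0.20, 4.2
    for mode in [(1, 0), (0, 1), (1, 1)]:
        f = plane_resonance_hz(a, b, eps_r, *mode)
        print(f"mode {mode}: about {f / 1e6:.0f} MHz")
```

If one of these frequencies lines up with a strong switching harmonic, power supply noise can spike, which is the kind of effect a vector network analyzer sweep would reveal.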

Now, whenever I see manufacturers advertising server performance, I pay special attention to which company’s PCB solution they used. This is like judging the performance of a sports car; you can’t just look at the engine parameters, you also need to consider how well the chassis and transmission are matched to it. After all, in the era of data deluge, even the most powerful chip needs a high-quality circuit board to unleash its full potential.

I’ve always felt that many people have a misconception about high-performance computing: that simply piling on the most expensive GPUs will solve the problem. In reality, what truly determines system stability is often the inconspicuous basic components.

I remember discovering an interesting phenomenon last year when I was helping a friend debug an AI training server. Despite using a top-of-the-line graphics card, performance remained unstable. Troubleshooting revealed a problem with a power module on the motherboard. That seemingly simple server PCB actually handles far more complex tasks than we imagine: it not only manages high-speed data interconnects between GPUs but also has to deliver power as clean as spring water.

I’ve worked with several different server PCB suppliers, and their design philosophies differ greatly. Some prioritize ultimate signal integrity, willing to increase costs by using specialized materials; others focus more on overall heat dissipation solutions. Once, visiting their lab, I saw engineers meticulously testing different insulating layers—their dedication reminded me of Swiss watchmakers.

Many discussions about multi-GPU parallel processing focus on NVLink technology, but few consider the importance of physical connections. Like building blocks, beautiful blocks alone aren’t enough; a sturdy base is essential. The tiny circuits hidden within the PCB form the neural network of the entire system.

I once witnessed a server from a certain brand exhibit signal attenuation after prolonged high-load operation. It turned out the substrate material had undergone minute deformation at high temperatures. This detail made me realize that material selection cannot rely solely on specifications; the thermal accumulation effects of the actual operating environment must also be considered.

I think server design is a bit like brewing tea—no matter how good the tea leaves are, if the water temperature isn’t right, you won’t brew a good cup. The copper traces responsible for signal transmission are like water channels; even slight impurities or incorrect bends can affect the overall flavor.

Recently, while researching different heat dissipation solutions, I discovered a paradox: sometimes, in pursuit of better heat conduction, metal substrates are used, but this can actually interfere with high-frequency signals. This mutually restrictive relationship is particularly interesting, like designing a jacket that needs to be both warm and breathable.

What impresses me most is the speed of technological iteration in this industry. A design that seemed cutting-edge last week might be overturned by new materials this week. The one constant is the pursuit of precision: whether it’s micrometer-level line alignment or microsecond-level signal synchronization, it all reminds us that details are the real dividing line.

I’ve always felt that many people’s understanding of servers is still limited to that dark, intricate chassis. In reality, what truly determines performance is often what we can’t see. Take PCBs, for example; the motherboards used in ordinary computers and servers are completely different.

I remember once going to a server room for maintenance and opening an old server; seeing the densely packed wiring inside was particularly striking. With the increasing prevalence of AI applications, the demands for data transmission speeds are growing exponentially. This forces server PCB suppliers to constantly overcome technological bottlenecks. An engineer I know recently complained that their 20-layer board had just entered mass production when customers started asking if they could make more complex structures.

Choosing server components is quite interesting these days. Some manufacturers opt for imported PCBs in pursuit of ultimate performance, but domestic manufacturers have made rapid progress in recent years. Especially during emergency expansions, local suppliers offer a significant advantage in response time.

Last week, a customer urgently needed to add an AI training server. We initially expected a long lead time, but it only took five days from order placement to installation.

Heat dissipation remains a hurdle. The power consumption of high-performance chips is increasingly outrageous, and traditional air cooling is barely sufficient.

I once saw a supplier testing a liquid cooling system; the entire PCB had to be redesigned around the coolant channels, like building a miniature irrigation system inside the motherboard. Even more disruptive changes are likely in the future.

I’ve heard some labs are researching integrating optical communication modules directly into the PCB, which could reduce data latency by another order of magnitude. However, the most practical approach right now is to master existing technologies, as not all companies need to pursue top-of-the-line configurations.

Sometimes I think the most fascinating thing about this industry is its constant evolution. Today’s new standards may be obsolete tomorrow, but it’s this pressure that drives the entire industry chain to continuously break through. Perhaps in a few years, the technical problems we’re struggling with now will become part of our basic knowledge.

I recently chatted with a friend who works in data centers and realized something—we talk all the time about how fast and intelligent cloud services are, but few people pay attention to the things actually running behind the scenes. Those rows and rows of machines actually hold a lot of interesting intricacies, especially that board that houses all the computing cores.

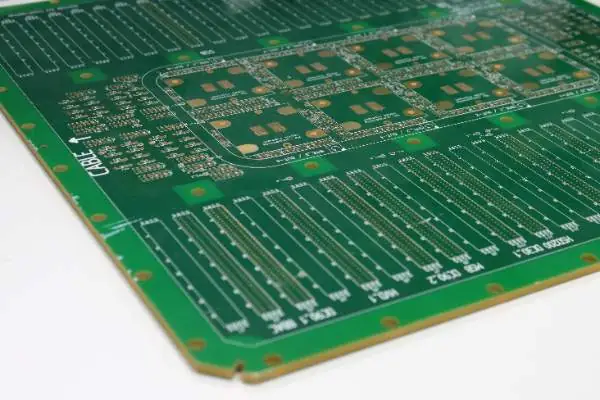

You might think it’s just a circuit board, but when you see the internal structure of a high-end server today, you’ll find that it’s no longer the green board with a few lines we remember. It’s more like a meticulously planned miniature city; every road must ensure high-speed data flow without congestion or power outages.

I remember a few years ago, when helping a friend select components, I encountered a case where their company’s original ordinary servers were constantly lagging when processing video rendering. After switching to a custom-designed solution, they discovered the problem was with the power supply lines. The ordinary board’s unstable voltage under high load directly dragged down the entire system’s performance. This experience taught me that sometimes, the latest CPU you buy at a high price might perform worse than an older configuration if it’s not paired with a suitable motherboard.

The current trend is quite obvious. Major manufacturers are customizing their own motherboards because off-the-shelf solutions often fail to meet specific needs. For example, in some scenarios, multiple GPUs need to be squeezed together, making heat dissipation and signal interference major problems. In such cases, a reliable supplier can optimize the routing layers from the outset, rather than patching them afterward. I’ve seen designs that divide the power layer into several sections for independent control, allowing the CPU and peripheral chips to each draw power according to their needs.

Another easily overlooked aspect is heat dissipation. Dense wiring generates heat during operation. If the board’s materials can’t withstand the high temperatures, even the best chips will fail prematurely. That is why high-end boards now use upgraded laminates rather than basic, entry-level materials.

Ultimately, technology iterates in ways you don’t notice. We might focus more on the screen’s refresh rate, but what truly makes everything faster are often the unsung heroes hidden inside the chassis. Next time you upgrade your equipment, perhaps you should pay more attention to the PCB design, instead of just focusing on surface parameters.

Recently, I’ve noticed an interesting phenomenon: many people, when discussing server performance, tend to focus on the CPU model or memory size. However, what truly determines server stability is often the inconspicuous components. Take the server PCB, for example; the quality of this substrate directly affects overall system efficiency. I’ve encountered numerous cases where the same chip configuration performed drastically differently on substrates of varying quality. Once, we tested two servers with identical configurations, and the one using a PCB from a second-tier supplier frequently experienced signal interference under high load.

In data center environments, where server clusters handle a large number of concurrent requests, inferior PCBs can lead to increased data transmission error rates and even memory check errors. These problems are often difficult to detect in routine testing and only become apparent under prolonged high-load operation. This made me realize the importance of choosing a reliable server PCB supplier.

Currently, some manufacturers cut corners on PCB materials to reduce costs. They may think users won’t open the chassis to inspect the substrate quality, but this practice causes problems sooner or later. Because servers need to operate 24/7, a high-quality server PCB should have stable electrical performance and excellent heat dissipation characteristics. Specifically, high-end server PCBs typically use copper-clad laminates with a high Tg (glass transition temperature), which maintain better mechanical strength and insulation properties in high-temperature environments. Meanwhile, tight control of the dielectric constant and loss factor preserves the integrity of high-frequency signal transmission.

I appreciate suppliers who pay attention to detail. They consider signal integrity during the substrate design phase and use multi-layer board structures to reduce interference. For example, some leading manufacturers place ground layers around critical signal layers to create effective electromagnetic shielding. Furthermore, with increasingly stringent environmental requirements, reputable PCB manufacturers choose recyclable materials, which also benefits their corporate image. In material selection, they may use halogen-free flame-retardant substrates, which comply with RoHS environmental standards and improve the product’s fire safety.

In the long run, investing in good server PCBs can actually save money. High-quality substrates can extend server lifespan by several years, reducing downtime losses due to failures. I’ve seen too many companies choose cheap PCBs to save a little money, only to see maintenance costs multiply several times over later. Especially in industries with extremely high system reliability requirements, such as finance and healthcare, a single business interruption caused by a PCB failure can result in millions in direct losses, not to mention the negative impact on the company’s reputation.

Speaking of future trends, I think server PCBs will increasingly focus on integration. With the rapid advancements in chip manufacturing processes, PCB substrates must keep pace. Future servers may require more complex circuit designs, posing a challenge to suppliers’ technical capabilities. For example, with the widespread adoption of high-speed interfaces like PCIe 5.0, PCBs need to achieve stricter impedance control and lower insertion loss. Simultaneously, to meet emerging demands such as AI computing, it may be necessary to integrate optical communication modules or accelerator interfaces onto the substrate.

However, it’s important to note that innovation does not equate to blindly pursuing new technologies. Some manufacturers like to pile on the latest processes, but actual application scenarios may not require such high specifications. Choosing the right server PCB requires considering actual business needs and finding the most cost-effective solution. For example, for file servers in small and medium-sized enterprises, an 8-layer PCB with standard FR-4 materials may be sufficient, rather than pursuing high-end solutions with 20 layers or more. The key is to strike a balance based on workload characteristics, expected lifespan, and total cost of ownership.

Ultimately, every component of a server is important. As the substrate connecting all components, the quality of the PCB directly affects overall performance. Next time you purchase a server, pay more attention to this seemingly ordinary component; you might discover many noteworthy details.

